Key Takeaways

Tracking nutrition requires precise measurements, but carrying a physical kitchen tool everywhere is impractical. Digital scale apps have emerged as a convenient alternative, yet understanding how their computer vision weight measurement affects your caloric deficit is essential before relying on them in 2026.

Are phone scale apps accurate?

Phone camera measurement tools are moderately precise, with ideal laboratory conditions yielding minor error margins for simple, distinct foods. They are excellent for approximate daily tracking but cannot match the precision of a physical calibrated device.

According to Automated Food Weight and Content Estimation Using Computer Vision and AI Algorithms (Gonzalez et al., Sensors 2024), AI system validation using rice and chicken yielded error margins of 5.07% and 3.75%. These represent best-case scenarios under perfect lighting with simple, unmixed ingredients.

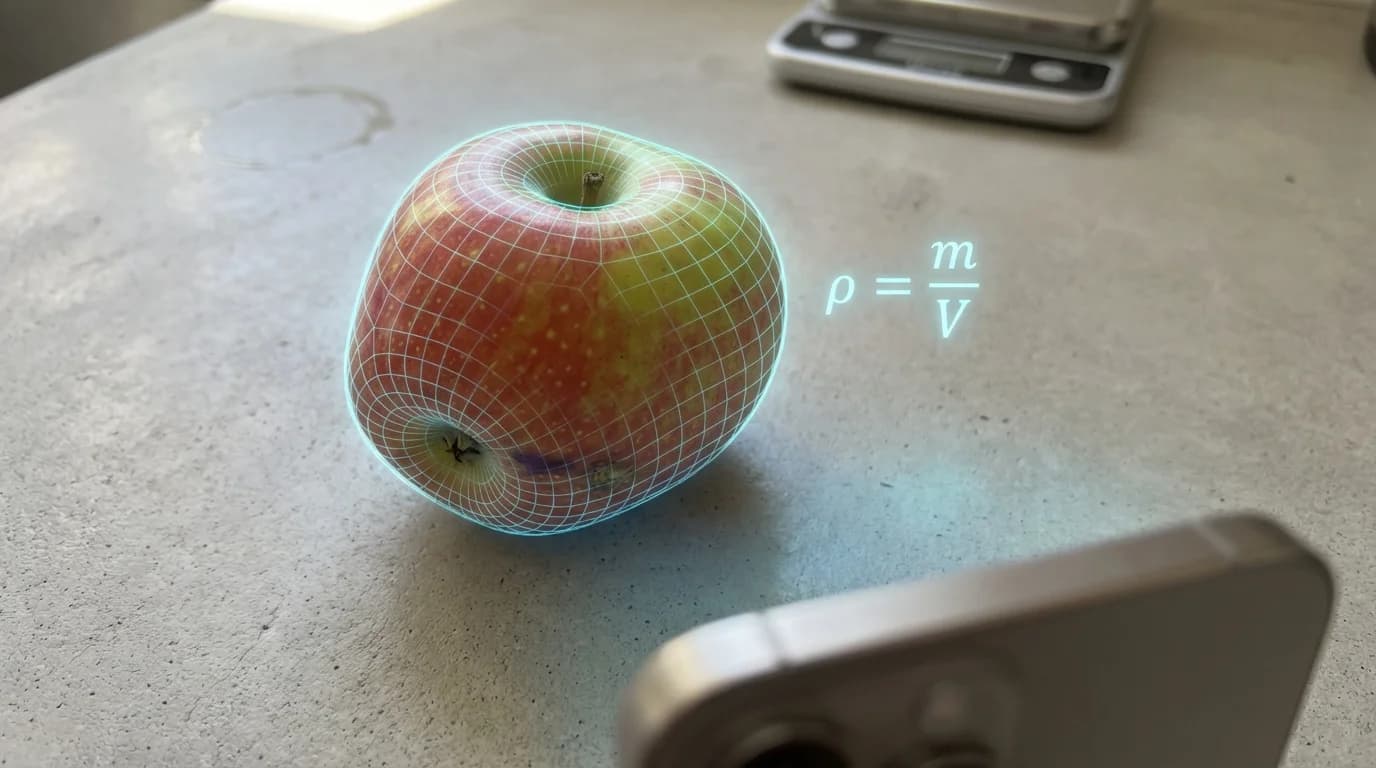

Computer vision weight measurement relies on analyzing your meal's visual footprint. The software uses your device lens to map physical dimensions, then cross-references them with known density metrics for that recognized ingredient.
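The volume-times-density idea described above can be sketched in a few lines of Python. This is a minimal illustration, not any app's actual pipeline: the function name, the box-shaped volume approximation, the `fill_factor` correction, and the density values are all assumptions made for the example.

```python
# Hypothetical density table in grams per cubic centimetre (illustrative values only).
DENSITY_G_PER_CM3 = {
    "cooked_rice": 0.80,
    "chicken_breast": 1.05,
}


def estimate_weight_grams(food, length_cm, width_cm, height_cm, fill_factor=0.6):
    """Estimate weight as volume x density.

    The food is approximated as a box scaled by `fill_factor`, an assumed
    correction for the fact that real portions do not fill their bounding box.
    """
    if food not in DENSITY_G_PER_CM3:
        raise KeyError(f"no density entry for {food!r}")
    volume_cm3 = length_cm * width_cm * height_cm * fill_factor
    return volume_cm3 * DENSITY_G_PER_CM3[food]


# A mound of rice roughly 10 cm x 8 cm across and 4 cm tall.
print(round(estimate_weight_grams("cooked_rice", 10, 8, 4), 1))
```

Every input here is itself an estimate, so an error in the measured height or in the assumed density carries straight through to the final gram figure.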

Complex meals introduce significantly higher variability because the camera cannot accurately determine depth or hidden ingredients. Therefore, a mobile application is best used as an estimation tool. Always consult a healthcare professional for specific medical dietary management.

Can you use your phone as a food scale?

You can use your smartphone to estimate food portions visually, but it does not function as a physical weighing surface. The device uses artificial intelligence to estimate volume and apply density multipliers rather than measuring actual mass.

According to Automated Food Weight and Content Estimation Using Computer Vision and AI Algorithms: Phase 2 (Gonzalez et al., Sensors 2025), Phase 2 confirmed the same error margins (5.07% for rice, 3.75% for chicken) from the original study, validating the methodology across real-world settings like corporate dining halls. This deployment suggests that camera-based estimation is viable for everyday, casual tracking.

As Dr. Gonzalez, lead author of the Sensors study, explains: "The weight estimation procedure combines computer vision techniques to measure food volume using both RGB and depth cameras, then applies density models specific to each food type." This process is entirely visual and mathematical.

Our comprehensive Digital Scale Apps: Can You Use Your Phone As A Food Scale? (2026 Guide) explores these hardware limitations. Your smartphone screen has no load sensors capable of weighing items directly. Placing an apple on it will not produce a reading and may scratch the glass.

How to measure grams without a scale?

To measure grams without a physical device, you must rely on volumetric food measurement combined with established density databases. AI estimation platforms automate this process by scanning your meal and calculating the estimated mass based on detected size.

According to Applying Image-Based Food-Recognition Systems on Dietary Assessment: A Systematic Review (Dalakleidi et al., Advances in Nutrition 2022), 45 (58%) of the included studies adopted deep learning methods, especially convolutional neural networks (CNNs), to tackle this estimation challenge. These networks are trained on thousands of reference images to learn how different foods look at various weights.

When lacking a kitchen tool, common household objects provide rough visual comparisons. A deck of playing cards roughly equals 85 grams of cooked meat, while a baseball approximates 150 grams of whole fruit. However, these mental shortcuts are highly subjective and prone to severe human error.

For those strictly monitoring intake, How To Measure Without A Scale For Macros (2026) provides actionable strategies. Modern smartphone applications bridge the gap between unreliable visual guessing and exact physical weighing.

How much does this weigh on a phone app?

The returned weight depends entirely on the app's specific image recognition algorithm and internal density database. Results deviate widely, sometimes showing extreme margins of error for complex or layered meals.

According to AI-based digital image dietary assessment methods compared to humans and ground truth: a systematic review (Shonkoff et al., Ann Med 2023), average overall relative errors (AI vs. ground truth) ranged from 0.10% to 38.3% for calories across 52 studies. This wide range indicates that the specific food item being measured heavily dictates the accuracy of the outcome.
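For readers unfamiliar with the metric, relative error is the gap between the estimate and the ground truth, divided by the ground truth. The sketch below shows the calculation. The food names and calorie figures are hypothetical values chosen for illustration, not data from the review.

```python
def relative_error_pct(estimated, actual):
    """Absolute relative error of an estimate versus ground truth, in percent."""
    if actual == 0:
        raise ValueError("ground truth must be non-zero")
    return abs(estimated - actual) / abs(actual) * 100


# Hypothetical (app estimate, weighed ground truth) pairs in kcal.
samples = {
    "banana": (102, 105),
    "layered lasagna": (310, 450),
}

for food, (estimated, actual) in samples.items():
    print(f"{food}: {relative_error_pct(estimated, actual):.1f}% error")
```

In this made-up example the single, distinct item lands near the low end of the reported range and the layered dish near the high end, which mirrors the pattern the review describes.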

Our guide Which Digital Scale Apps Work? How To Weigh Without A Scale (2026) compares specific tools. As a rule, distinct items like a banana score much better, while mixed meals confuse depth sensors and lead to higher inaccuracies.

Always verify the classification before accepting the final number. Correcting a wrong food label manually stops the app from applying the wrong density and calorie values, which helps keep tracking reliable.

Can I weigh something on my phone?

You cannot weigh objects directly on your smartphone screen because devices lack external load cells calibrated for mass. Software claiming to turn your screen into a functional pressure pad is providing a novelty simulation.

According to Carbohydrate Estimation Accuracy of Two Commercially Available Smartphone Applications vs Estimation by Individuals With Type 1 Diabetes (Baumgartner et al., J Diabetes Sci Technol 2025), Calorie Mama, one of the two apps tested, had a mean absolute carbohydrate estimation error of 24 ± 36.5 g (81.2 ± 123.4%), a relative error exceeding 80% on average.

As Dr. Baumgartner notes: "Human estimators had a mean absolute error of 21 ± 21.5 grams, meaning the best commercial AI app still outperformed human estimation in controlled settings."
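Figures written as "mean ± standard deviation" summarize how far estimates miss on average and how much those misses vary. The sketch below computes both using Python's standard `statistics` module. The helper name `mae_with_sd` and the carbohydrate values are hypothetical and are not taken from the study.

```python
from statistics import mean, stdev


def mae_with_sd(estimates, truths):
    """Return (mean absolute error, sample standard deviation of the absolute errors)."""
    if len(estimates) != len(truths):
        raise ValueError("estimates and truths must have the same length")
    abs_errors = [abs(e - t) for e, t in zip(estimates, truths)]
    return mean(abs_errors), stdev(abs_errors)


# Hypothetical carbohydrate values in grams for five meals.
true_carbs = [30, 45, 60, 20, 80]
app_carbs = [28, 70, 55, 22, 40]

mae, sd = mae_with_sd(app_carbs, true_carbs)
print(f"MAE: {mae:.1f} ± {sd:.1f} g")
```

When the standard deviation is larger than the mean, as in the Calorie Mama figures quoted above, it usually signals that a few meals were missed by a very wide margin rather than every meal being off by a similar amount.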

If you are attempting to calculate exact dosages for insulin or manage strict dietary medical conditions, you must consult healthcare professionals. A physical, calibrated device is strictly mandatory for these precise use cases.

How do AI camera scale inaccuracies affect daily calories?

Estimation errors compound throughout the day, potentially shifting your total daily intake by several hundred calories. This variance can easily erase a modest caloric deficit, stalling progress for those monitoring their body composition.

According to Pooled results from 5 validation studies of dietary self-report instruments using recovery biomarkers for energy and protein intake (Freedman et al., Am J Epidemiol 2014), the average rate of under-reporting of energy intake was 15% with a single 24-hour recall. Humans are notoriously bad at remembering and estimating portion sizes accurately on their own.

Adding an AI tool whose error can run from about 5% to 38% stacks a second source of inaccuracy on top of human under-reporting. For a 2,000-calorie daily target, a 15% error equals 300 undocumented calories, which completely nullifies a standard 250-calorie deficit.
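The arithmetic above can be checked for different error rates with a short script. It is a simplified sketch that assumes the log underestimates intake by a fixed percentage of the logged total. The function name `remaining_deficit_kcal` is hypothetical.

```python
def remaining_deficit_kcal(logged_kcal, planned_deficit_kcal, underestimate_pct):
    """Deficit left after accounting for calories the log missed.

    Assumes the log underestimates true intake by `underestimate_pct` percent
    of the logged total, which is a simplification.
    """
    untracked_kcal = logged_kcal * underestimate_pct / 100
    return planned_deficit_kcal - untracked_kcal


# 2,000 kcal logged, 250 kcal planned deficit, at three error rates.
for pct in (5, 15, 38):
    left = remaining_deficit_kcal(2000, 250, pct)
    print(f"{pct}% error: {left:+.0f} kcal deficit remaining")
```

A negative result means the untracked calories exceed the planned deficit, so the day ends in a surplus despite what the log shows.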

Our article What Are The Newest AI Phone Scale Apps For 2026? tracks which tools minimize this gap. Newer platforms use LiDAR and depth sensors to map volume much more precisely than standard 2D photos.

What are the best digital food scale apps in 2026?

The best digital estimation platforms in 2026 combine convolutional neural networks with user-friendly manual correction tools. Top-tier tools integrate depth-sensing technology to improve spatial volume mapping and reduce baseline calculation errors.

According to the systematic review by Dalakleidi et al. (Advances in Nutrition 2022), which screened 159 studies, deep learning methods consistently outperformed other approaches on large publicly available food datasets. Applications built on these architectures tend to provide better baseline estimations.

Here is a functional comparison of top-tier visual estimation tools available this year:

| App Name | Measurement Method | Best For | AI Accuracy Focus |
|---|---|---|---|
| SNAQ | 3D Depth + CNN | Diabetics needing quick carb estimates | Moderate to High |
| MacroFactor | Visual + Database | Athletes tracking macros strictly | High (Manual input) |
| FoodVisor | 2D Photo Scan | Casual dieters needing visual logging | Moderate |
| Calorie Mama | 2D Classification | Quick, single-item recognition | Low to Moderate |

SNAQ is best for tracking complex macros because it utilizes 3D depth data to map food volume. MacroFactor excels for strict tracking because it focuses on user-adjusted inputs and dynamic expenditure algorithms rather than pure automated visual scanning.

Frequently Asked Questions

Can an app weigh food in grams exactly?

No application can measure exact physical mass. They estimate weight by calculating the visual volume of the food and applying specific density multipliers.

Do I need a physical scale for macronutrient tracking?

While not strictly mandatory for casual tracking, a physical device is highly recommended. Using a camera tool introduces a margin of error that can easily erase a caloric deficit.

How does computer vision estimate food weight?

The software uses your device camera to map the spatial dimensions of an item. It then cross-references this estimated volume with a database of known densities for that specific ingredient.

What foods are hardest for AI to measure?

Complex mixed meals, layered dishes, and liquids are incredibly difficult for algorithms to process. The camera cannot see hidden ingredients or determine the true depth of a dense stew.

Sources

- Automated Food Weight and Content Estimation Using Computer Vision and AI Algorithms (Gonzalez et al., Sensors 2024)

- Automated Food Weight and Content Estimation Using Computer Vision and AI Algorithms: Phase 2 (Gonzalez et al., Sensors 2025)

- Carbohydrate Estimation Accuracy of Two Commercially Available Smartphone Applications vs Estimation by Individuals With Type 1 Diabetes (Baumgartner et al., J Diabetes Sci Technol 2025)

- AI-based digital image dietary assessment methods compared to humans and ground truth: a systematic review (Shonkoff et al., Ann Med 2023)

- Pooled results from 5 validation studies of dietary self-report instruments using recovery biomarkers for energy and protein intake (Freedman et al., Am J Epidemiol 2014)

- Applying Image-Based Food-Recognition Systems on Dietary Assessment: A Systematic Review (Dalakleidi et al., Advances in Nutrition 2022)